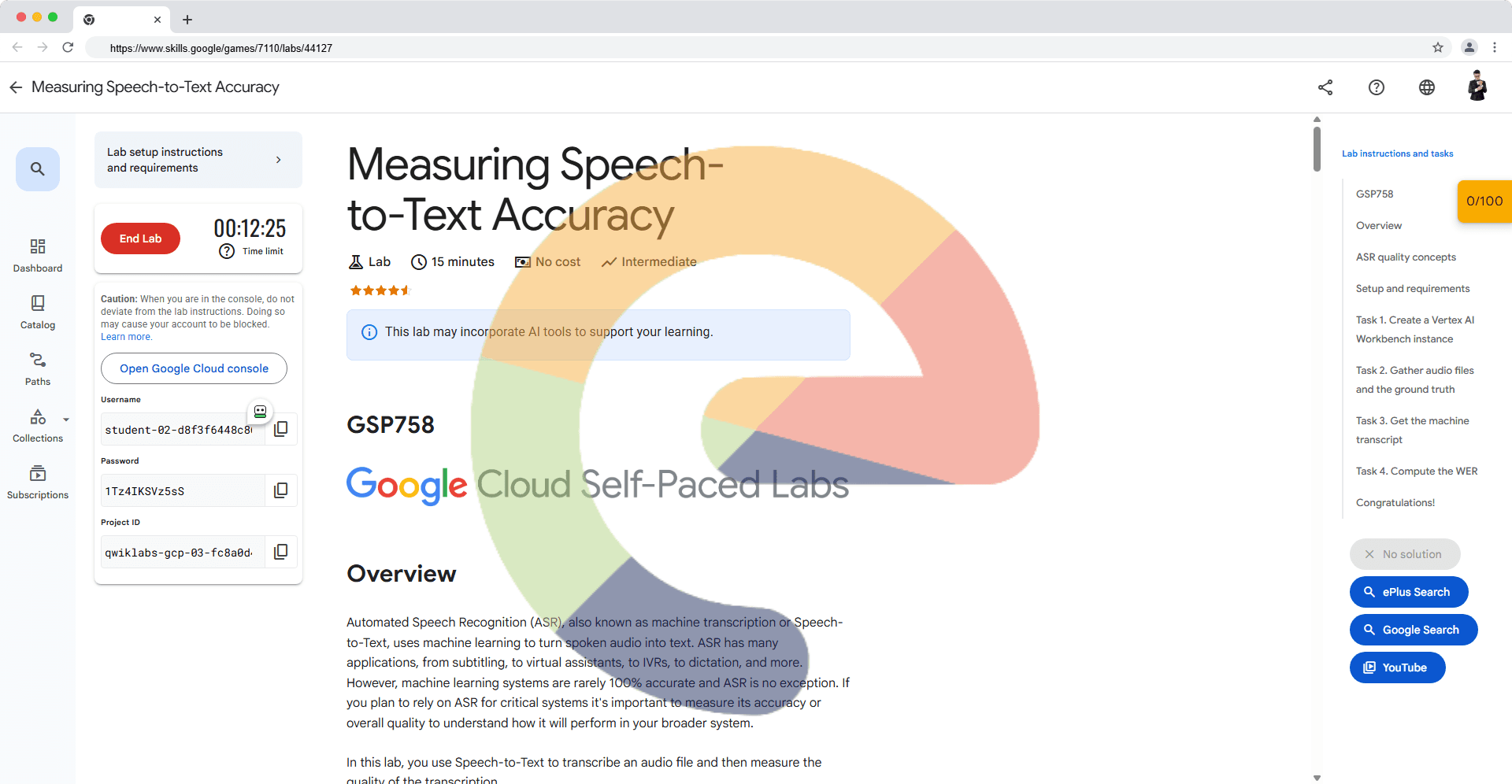

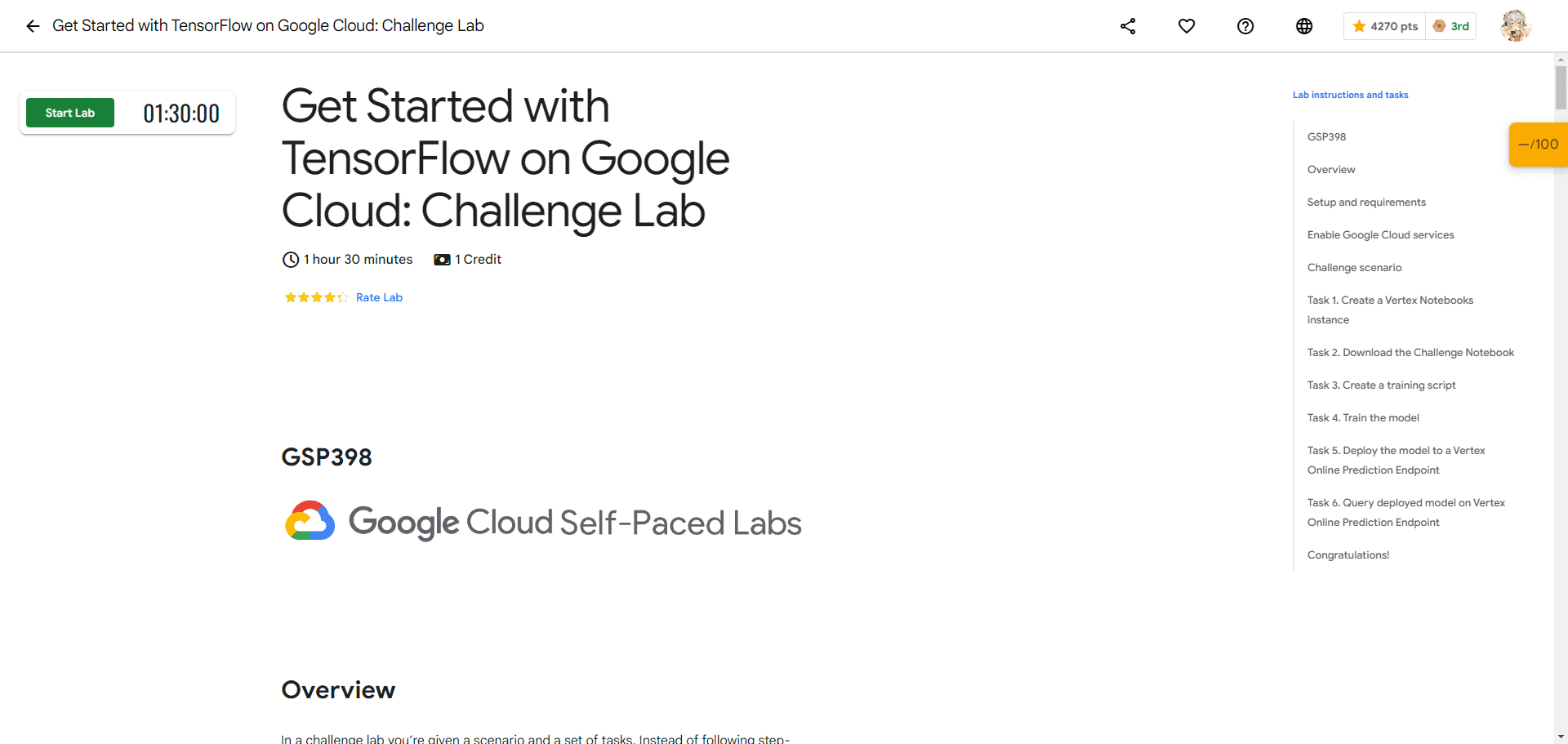

Get Started with TensorFlow on Google Cloud: Challenge Lab

A passionate full-stack developer from @ePlus.DEV

Overview

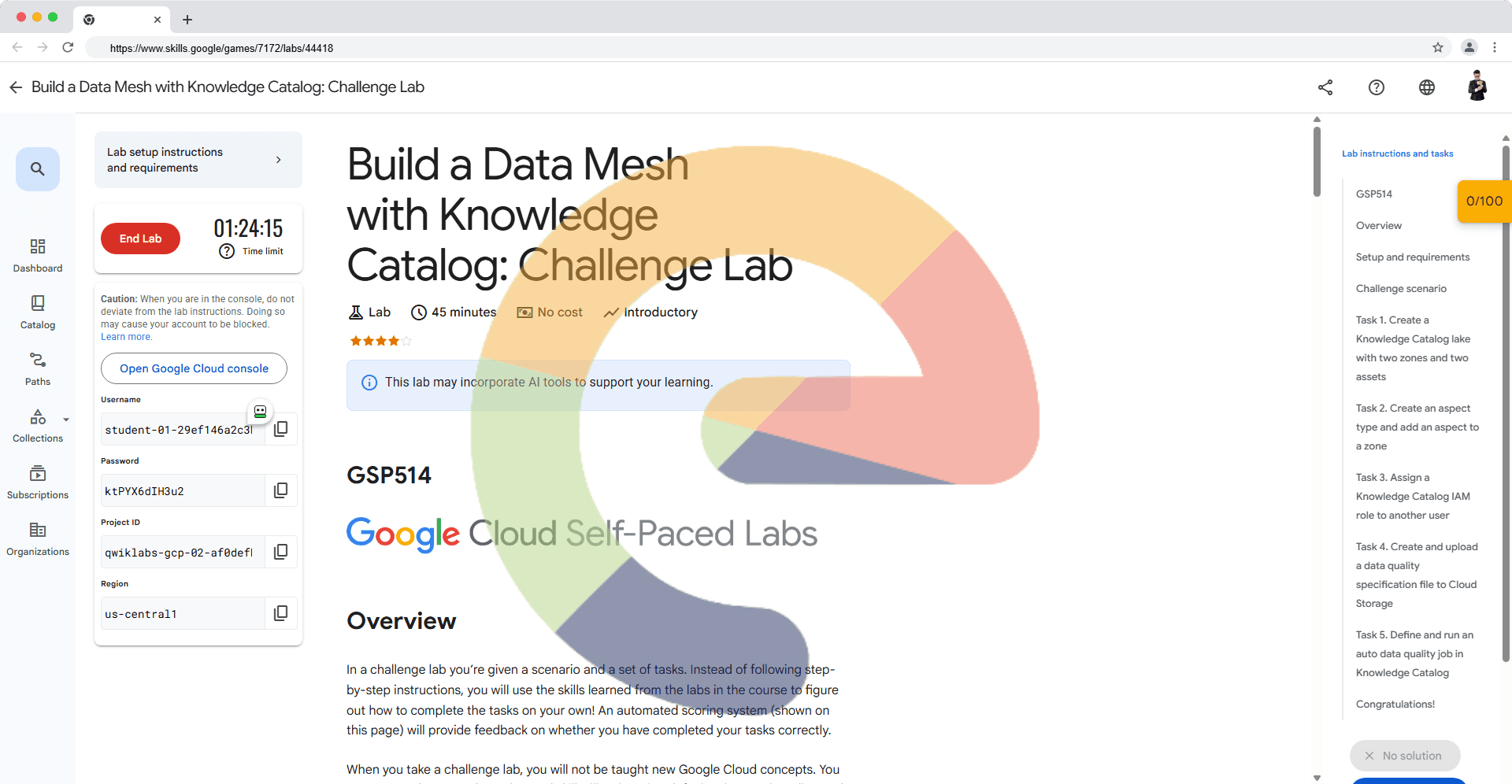

In a challenge lab you’re given a scenario and a set of tasks. Instead of following step-by-step instructions, you will use the skills learned from the labs in the course to figure out how to complete the tasks on your own! An automated scoring system (shown on the lab page) provides feedback on whether you have completed your tasks correctly.

When you take a challenge lab, you will not be taught new Google Cloud concepts. You are expected to extend your learned skills, such as changing default values, and to read and research error messages to fix your own mistakes.

To score 100% you must successfully complete all tasks within the time period!

This lab is recommended for students who have enrolled in the Get Started with TensorFlow on Google Cloud skill badge. Are you ready for the challenge?

Topics tested:

Write a script that trains a CNN for image classification and saves the trained model to the specified directory.

Run your training script using a Vertex AI custom training job.

Deploy your trained model to a Vertex Online Prediction Endpoint for serving predictions.

Request an online prediction and see the response.

Task 1. Create a Vertex Notebooks instance

Navigate to Vertex AI > Workbench > User-Managed Notebooks.

Create a notebook instance. Select TensorFlow Enterprise 2.11 *Without GPUs*, name your notebook cnn-challenge, and leave the default configurations. Select the region specified in your lab instructions.

Click OPEN JUPYTERLAB next to the name of your notebook instance. It may take a few minutes for the OPEN JUPYTERLAB option to appear.

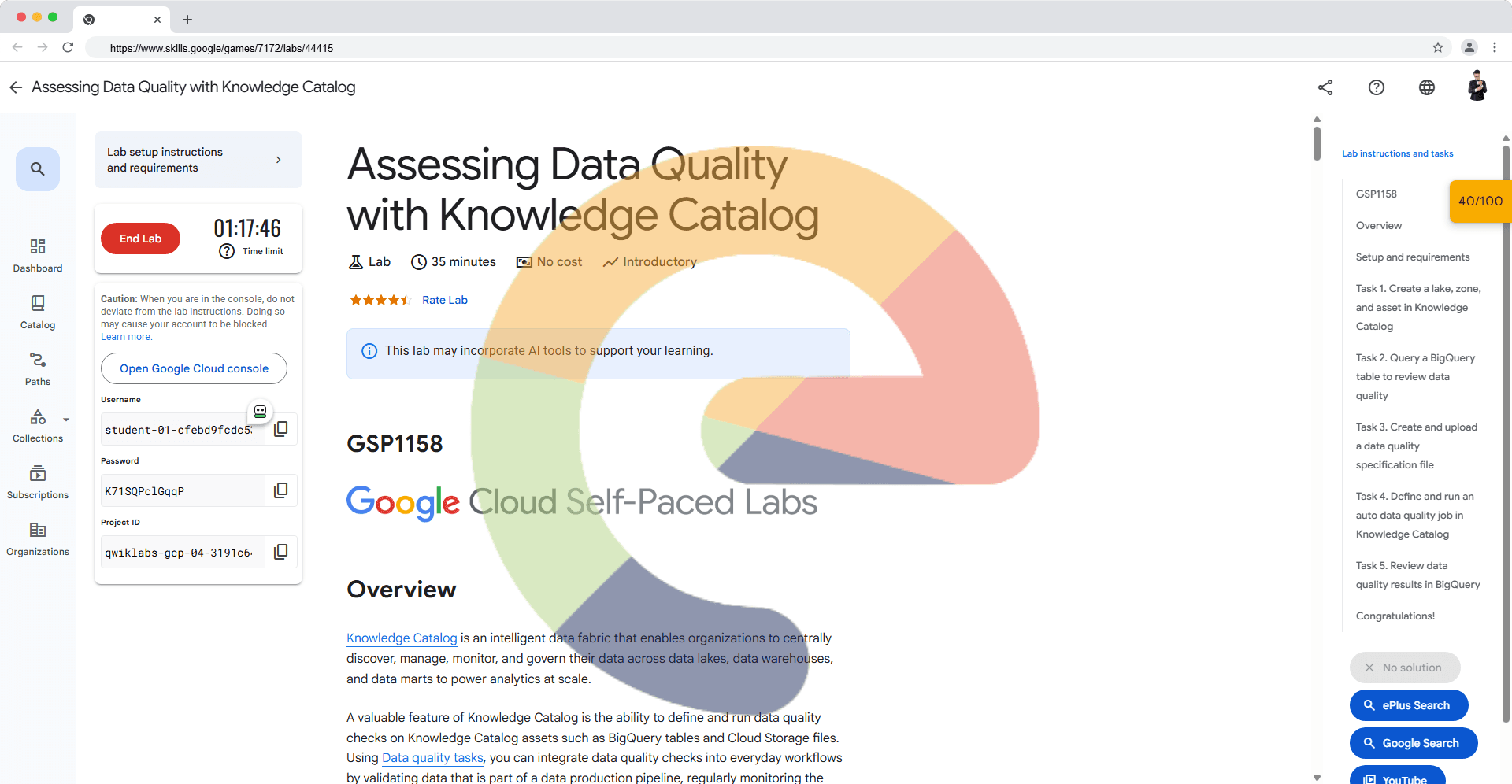

Task 2. Download the Challenge Notebook

```python
import os

# TODO: fill in PROJECT_ID.
if not os.getenv("IS_TESTING"):
    # Get your Google Cloud project ID from gcloud
    PROJECT_ID = "PROJECT ID"  # replace with your lab's project ID
```

```python
# TODO: Create a globally unique Google Cloud Storage bucket name for artifact storage.
# Project IDs are globally unique, so the project ID doubles as a bucket name.
BUCKET_NAME = f"gs://{PROJECT_ID}"
```
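Because project IDs are globally unique, reusing one as the bucket name is a convenient way to satisfy the uniqueness requirement. The sketch below derives the bucket URI from a project ID and checks it against a simplified subset of the Cloud Storage naming rules (3 to 63 characters; lowercase letters, digits, hyphens, and underscores; must start and end with a letter or digit; no "goog" prefix). The helper name and the sample project ID are hypothetical, and names containing dots, which follow longer rules, are rejected here for simplicity.

```python
import re

# Simplified subset of Cloud Storage bucket naming rules (dots not allowed here):
# 3-63 characters, lowercase letters, digits, '-' and '_',
# first and last character must be a letter or digit.
_BUCKET_RE = re.compile(r"^[a-z0-9][a-z0-9_-]{1,61}[a-z0-9]$")


def bucket_uri_from_project(project_id: str) -> str:
    """Hypothetical helper: reuse the (globally unique) project ID as the bucket name."""
    name = project_id.strip()
    # Names starting with the reserved "goog" prefix are not allowed.
    if not _BUCKET_RE.match(name) or name.startswith("goog"):
        raise ValueError(f"not a valid bucket name under the simplified rules: {name!r}")
    return f"gs://{name}"


print(bucket_uri_from_project("qwiklabs-gcp-00-1234abcd"))  # gs://qwiklabs-gcp-00-1234abcd
```

An unreplaced placeholder such as "PROJECT ID" fails the check (uppercase letters and a space), which catches a common copy-paste mistake before any bucket is created.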

```python
# TODO: Write the last layer.
# 10 output units (one per class); softmax turns them into class probabilities.
tf.keras.layers.Dense(10, activation=tf.nn.softmax)
```
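The last layer has 10 units, one per class, and the softmax activation turns the layer's raw scores (logits) into probabilities that sum to 1; the predicted class is the one with the highest probability. As a plain-Python sketch of what that activation computes (the logits are made up, and this illustrates the math rather than how Keras implements it internally):

```python
import math


def softmax(logits):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up logits for 10 classes (illustrative only).
logits = [1.2, -0.3, 0.0, 4.1, 0.5, -1.0, 2.2, 0.1, -0.7, 0.9]
probs = softmax(logits)
# Index of the highest probability = predicted class.
predicted_class = max(range(len(probs)), key=probs.__getitem__)

print(round(sum(probs), 6))  # 1.0
print(predicted_class)       # 3
```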

Task 3. Create a training script

```python
# TODO: Save your CNN classifier.
# MODEL_DIR is the output directory defined earlier in the training script.
model.save(MODEL_DIR)
```
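In a Vertex AI custom training job, the service sets the AIP_MODEL_DIR environment variable to a Cloud Storage directory intended for model artifacts, so saving there is what lets the job pick up the trained model. A minimal sketch of resolving MODEL_DIR from that variable, assuming a local fallback for test runs outside Vertex AI; the function name and the "./model" fallback are hypothetical.

```python
import os


def resolve_model_dir(default: str = "./model") -> str:
    """Return the directory the trained model should be saved to.

    On Vertex AI custom training, AIP_MODEL_DIR points at a Cloud Storage
    directory for model artifacts. Outside Vertex AI (e.g. a quick local run),
    fall back to a hypothetical local directory instead.
    """
    return os.environ.get("AIP_MODEL_DIR", default)


MODEL_DIR = resolve_model_dir()
print(MODEL_DIR)
```

With MODEL_DIR resolved this way, model.save(MODEL_DIR) writes to the service-provided location when running on Vertex AI and to a local folder otherwise.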

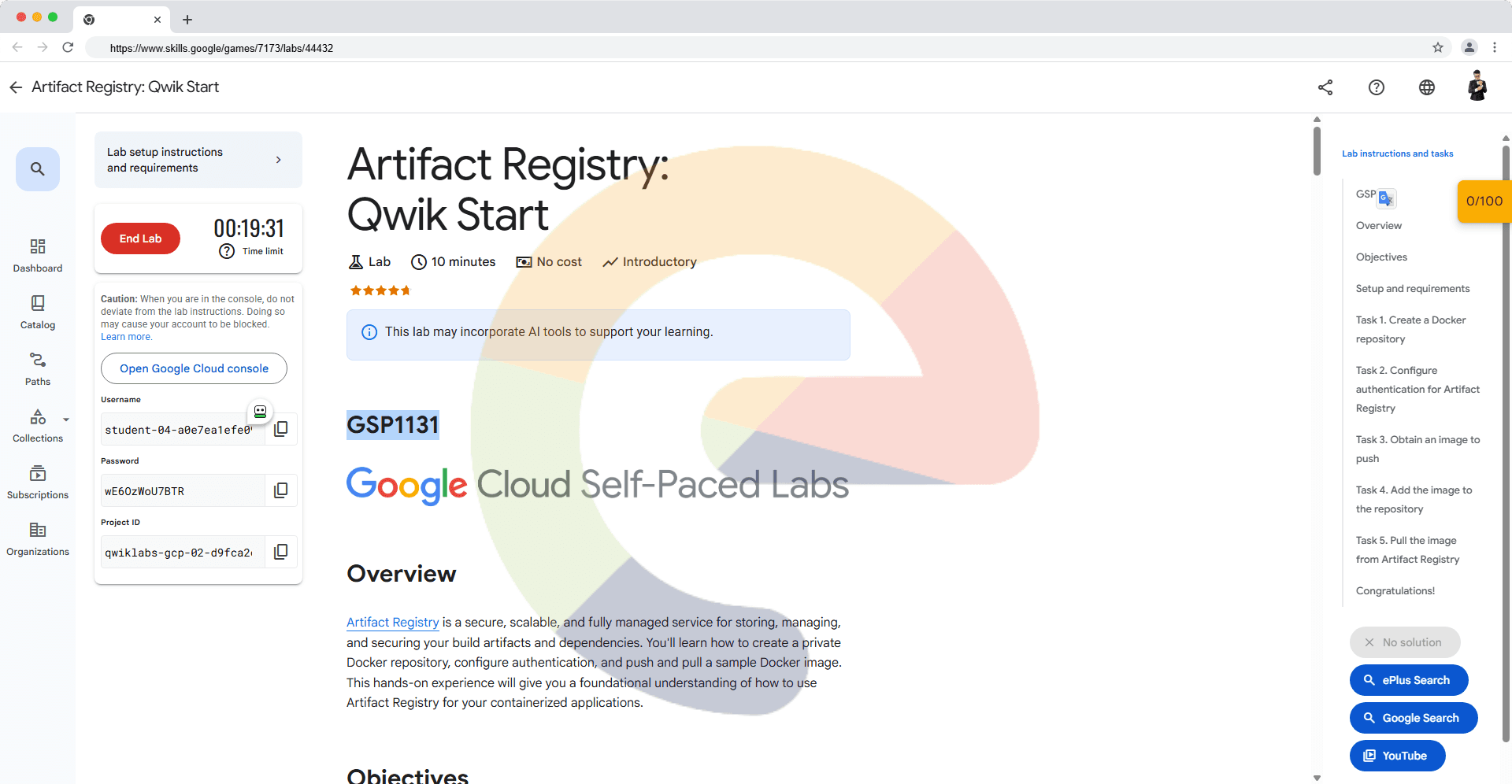

Task 4. Train the model

```python
from google.cloud import aiplatform

# TODO: fill in the remaining arguments for the CustomTrainingJob function.
job = aiplatform.CustomTrainingJob(
    display_name=JOB_NAME,
    requirements=["tensorflow_datasets==4.6.0"],
    # TODO: fill in the remaining arguments for the CustomTrainingJob function.
    container_uri=TRAIN_IMAGE,  # container image the training script runs in
    script_path="task.py",  # training script written in Task 3
    model_serving_container_image_uri=DEPLOY_IMAGE,  # container used to serve the model
)
```

Task 5. Deploy the model to a Vertex Online Prediction Endpoint

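Run the training job first: job.run() trains the model and returns the resulting Model resource, which is then deployed to an endpoint.

```python
# TODO: fill in the remaining arguments to run the custom training job function.
model = job.run(
    model_display_name=MODEL_DISPLAY_NAME,
    replica_count=1,
    accelerator_count=0,
    # TODO: fill in the remaining arguments to run the custom training job function.
    args=CMDARGS,  # command-line arguments passed to task.py
    machine_type="n1-standard-4",  # CPU-only training machine
)
```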
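The args=CMDARGS list reaches the training script as command-line arguments, so task.py can read it with argparse. A sketch of that parsing, assuming hypothetical --epochs and --steps flags; match the flag names and defaults to whatever CMDARGS actually contains in your notebook.

```python
import argparse


def parse_training_args(argv=None):
    """Parse hypothetical training flags that a CMDARGS list could carry."""
    parser = argparse.ArgumentParser(description="CNN training script (sketch)")
    parser.add_argument("--epochs", type=int, default=10, help="Number of training epochs.")
    parser.add_argument("--steps", type=int, default=200, help="Steps per epoch.")
    # With argv=None, argparse reads sys.argv[1:], i.e. the job's command-line args.
    return parser.parse_args(argv)


# Hypothetical CMDARGS, in the list-of-strings shape job.run(args=...) accepts.
CMDARGS = ["--epochs=5", "--steps=100"]
args = parse_training_args(CMDARGS)
print(args.epochs, args.steps)  # 5 100
```

Passing an explicit list, as above, is handy for testing the script locally; inside the job, calling parse_training_args() with no argument picks up the real arguments.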

```python
# TODO: fill in the remaining arguments to deploy the model to an endpoint.
endpoint = model.deploy(
    deployed_model_display_name=DEPLOYED_NAME,
    accelerator_type=None,
    accelerator_count=0,
    # TODO: fill in the remaining arguments to deploy the model to an endpoint.
    min_replica_count=MIN_NODES,  # autoscaling lower bound
    max_replica_count=MAX_NODES,  # autoscaling upper bound
)
```

Task 6. Query deployed model on Vertex Online Prediction Endpoint

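```python
# TODO: use your Endpoint to return prediction for your x_test.
# Convert the NumPy array to nested lists so it can be sent as JSON instances.
predictions = endpoint.predict(instances=x_test.tolist())
print(predictions.predictions)  # one list of class probabilities per instance
```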
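The response's predictions attribute holds one list of 10 class probabilities per instance sent. A sketch of turning those rows into class labels and an accuracy figure; the sample probabilities and labels are made up, and in the notebook you would pass predictions.predictions together with your test labels (assumed here to be integer class IDs in the same order as x_test).

```python
def top_classes(probabilities):
    """Return the index of the highest-probability class for each instance."""
    return [max(range(len(row)), key=row.__getitem__) for row in probabilities]


def accuracy(predicted, labels):
    """Fraction of predicted classes that match the true labels."""
    if len(predicted) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    return sum(p == y for p, y in zip(predicted, labels)) / len(labels)


# Made-up response rows (3 instances, 10 classes each) and labels, for illustration.
sample = [
    [0.01, 0.02, 0.01, 0.90, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01],
    [0.80, 0.02, 0.02, 0.02, 0.02, 0.02, 0.02, 0.02, 0.03, 0.03],
    [0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.15, 0.45],
]
labels = [3, 0, 8]

predicted = top_classes(sample)
print(predicted)                    # [3, 0, 9]
print(accuracy(predicted, labels))  # 0.6666666666666666
```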