Machine Learning Operations (MLOps) with Vertex AI: Model Evaluation - Quiz

A passionate full-stack developer from @ePlus.DEV

MLOps: Introduction to Model Evaluation Quiz

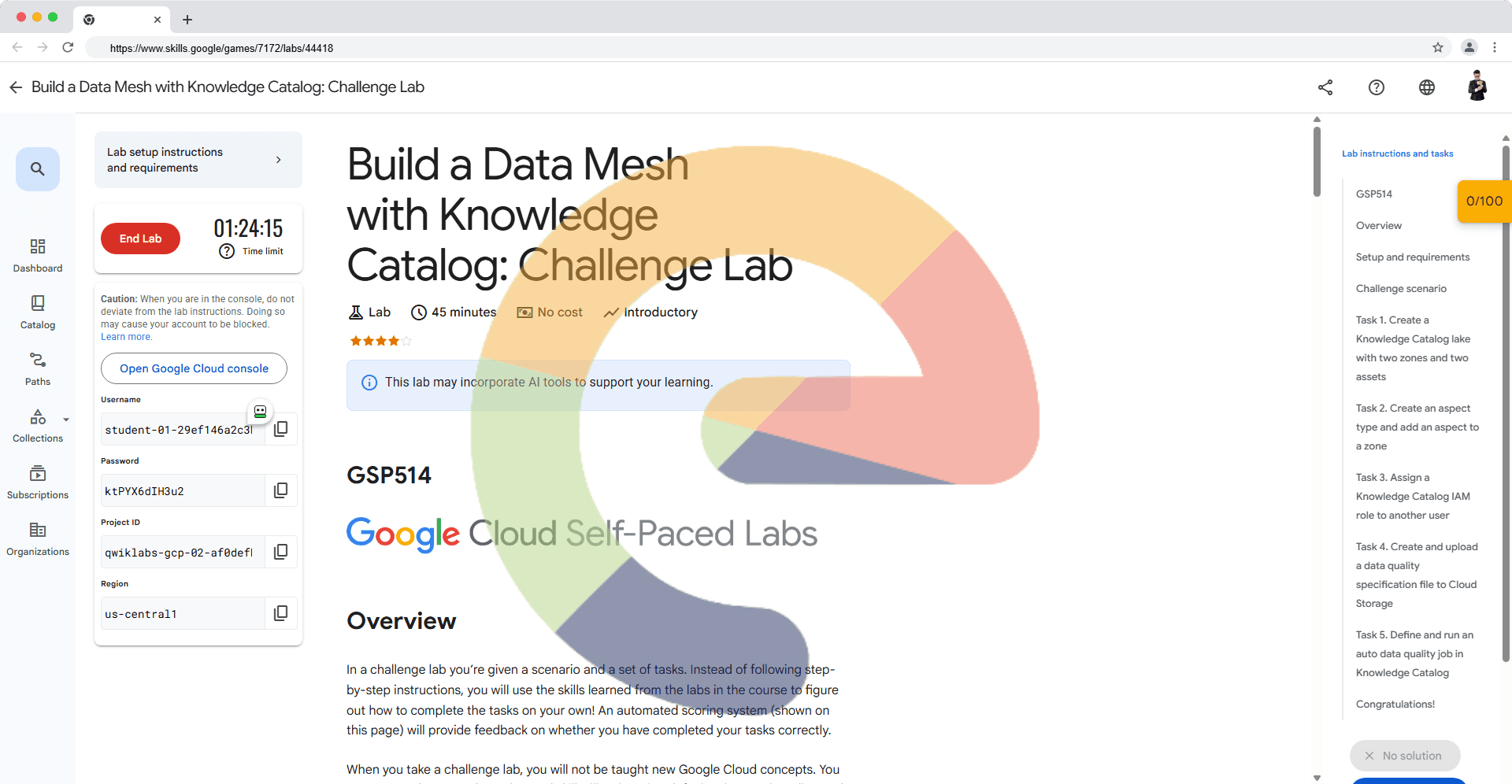

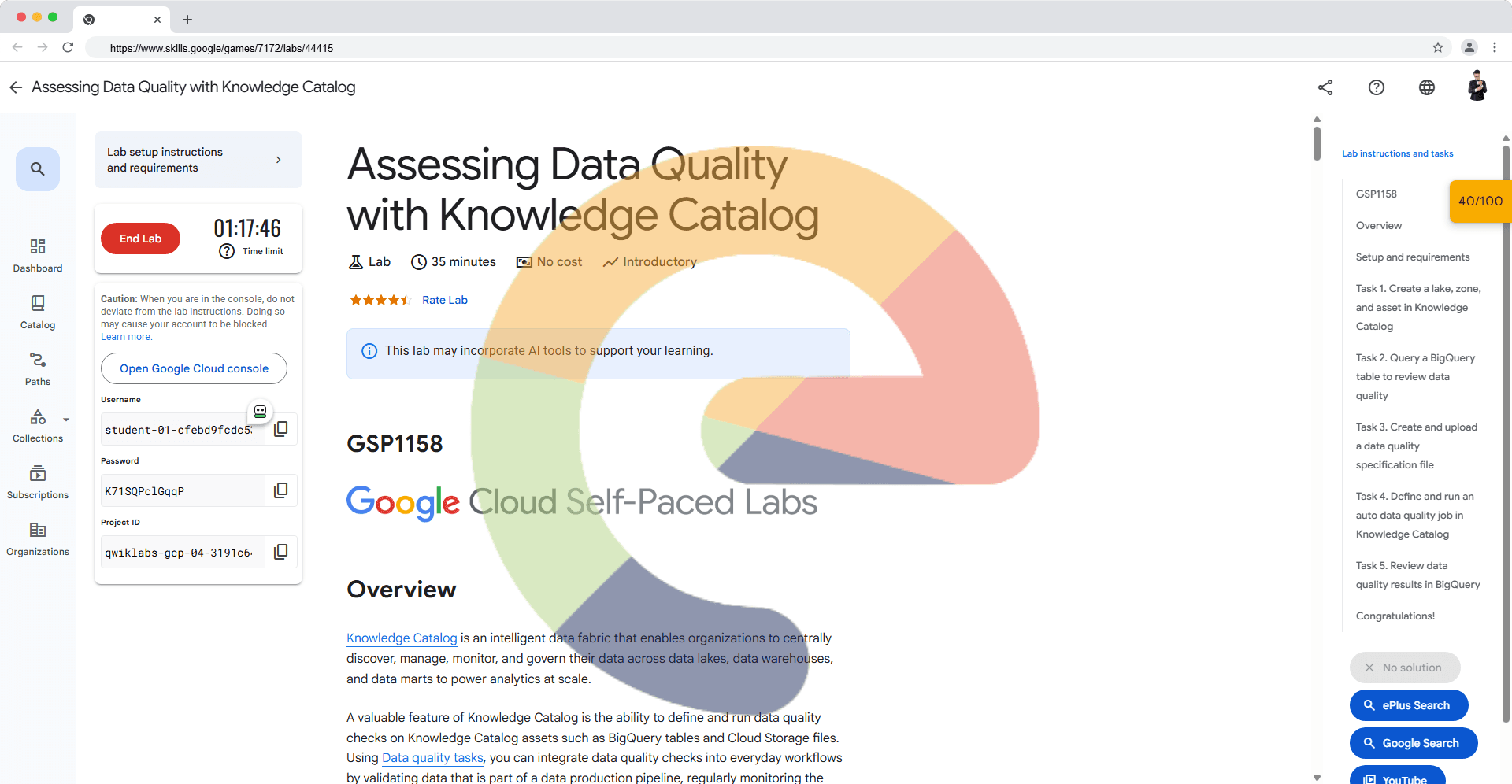

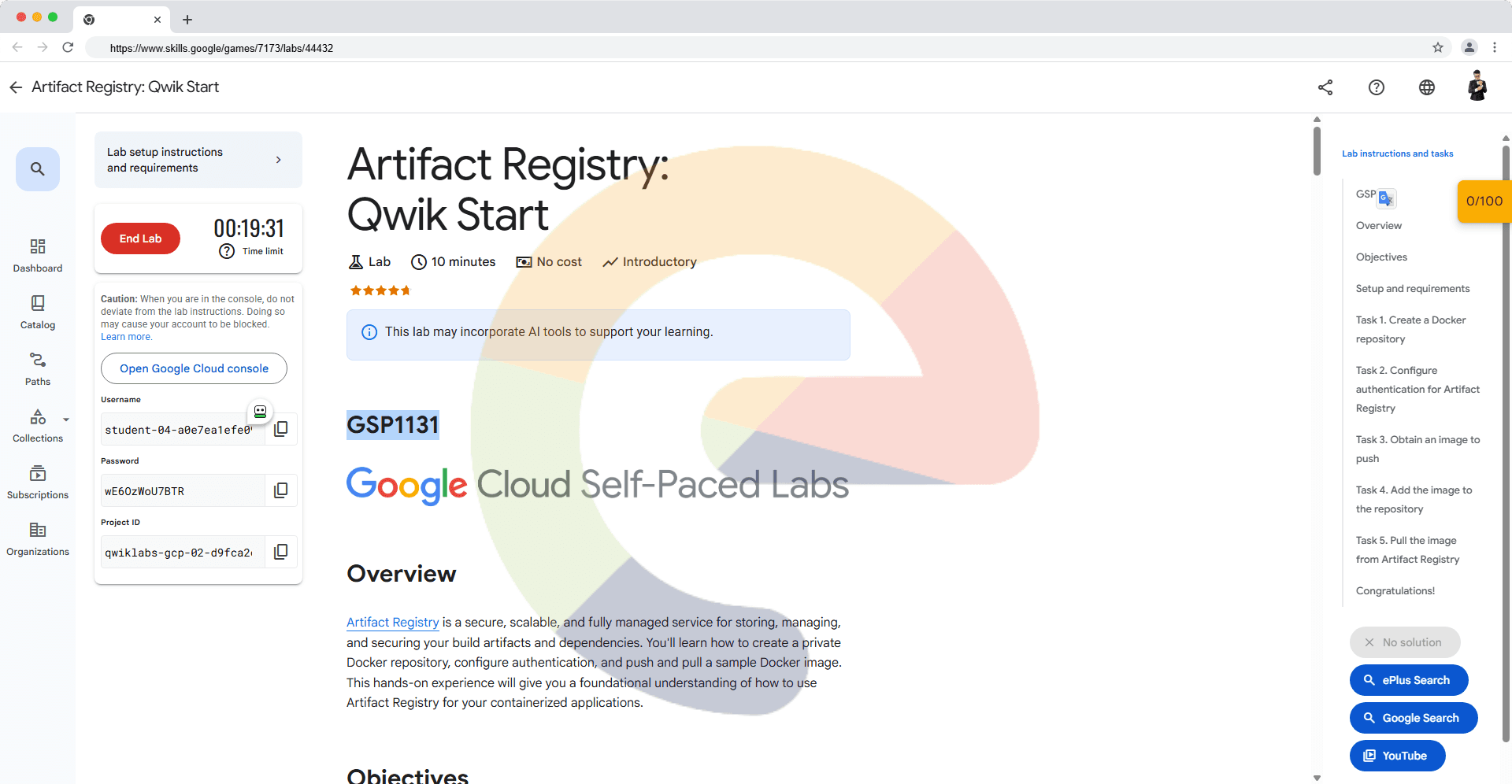

You're working on a machine learning project and need to evaluate your model's performance. Which of the following scenarios would benefit from using Vertex AI's model evaluation service? Select all that apply.

You need to monitor your deployed model's performance over time and detect potential issues like concept drift.

You want to get detailed feedback from users about the quality and relevance of your model's predictions.

You are concerned that your model may be overfitting to your training data and want to assess its performance on unseen data.

You have a large dataset and need to compare multiple model versions to find the best one.

An ML engineer is developing a customer churn prediction model. During model evaluation, they notice the model performs exceptionally well on the training data but poorly on new, unseen data. Which of the following concepts best describes this issue?

Overfitting

Generalization

Data shift

Bias-variance tradeoff

An ML engineer is working on a large-scale project that involves training multiple machine learning models. They are evaluating a new model and want to ensure it can adapt to changes in real-world data over time. Which of the following evaluation strategies should the engineer prioritize?

Leave-one-out cross-validation to assess the model's performance by leaving out each data point and retraining.

Holdout validation to assess the model's performance on a single, fixed dataset.

Cross-validation to assess the model's average performance across multiple data folds.

Continuous evaluation to monitor model performance on new data after deployment and retrain as needed.

A data science team is struggling to manage the lifecycle of their machine learning models from development to deployment. They face challenges with inconsistent model performance, difficulty tracking model versions, and a lack of collaboration between team members. Which of the following best describes how adopting an MLOps approach with Vertex AI could address these issues?

MLOps with Vertex AI would provide a structured framework for managing the entire ML lifecycle, promoting collaboration, enabling version control, and improving model performance consistency.

MLOps with Vertex AI would mainly focus on model evaluation, neglecting other crucial aspects like deployment and monitoring.

MLOps with Vertex AI would only help with model deployment, not addressing issues like inconsistent performance or collaboration.

MLOps with Vertex AI would primarily focus on automating model training, but wouldn't address versioning or collaboration challenges.

MLOps: Model Evaluation for Generative AI Quiz

You're tasked with evaluating multiple versions of a language model for summarizing news articles. You want to know which model produces the most informative and coherent summaries. Which evaluation type would be most appropriate?

Ranking evaluation: have human evaluators rank the summaries from different models based on their overall quality.

Multi-task evaluation: evaluate each model on additional tasks like question answering or text generation alongside summarization.

Numerical evaluation: calculate metrics like ROUGE or BLEU to measure the similarity between generated summaries and reference summaries.

Binary evaluation: assign a simple pass or fail judgment to each summary based on basic criteria.

An ML engineer is tasked with selecting the best-performing image classification model from three candidates, all trained on the same dataset. Their primary goal is to understand how each model performs in real-world scenarios and identify areas for potential improvement. Which evaluation approach would be most effective for this initial assessment?

Pointwise evaluation, focusing on the absolute performance of each model and identifying its strengths and weaknesses.

Pairwise evaluation, comparing the performance of each model against the others on specific tasks.

Multi-task evaluation, assessing each model's performance on a variety of image-related tasks beyond classification.

Binary evaluation, determining whether each model meets a pre-defined accuracy threshold.

You're evaluating a language model designed to generate creative stories. Which of the following evaluation approaches would be most relevant? Select all that apply.

Calculating the perplexity of the model's output.

Comparing the model's output to a standard grammar and syntax checker.

Assessing the diversity and originality of the generated stories.

Using BLEU score to measure similarity to reference stories.

When using evaluation methods like BLEU or ROUGE for LLM assessment, which of the following challenges is most likely to arise if the reference dataset is limited or biased?

The model may become vulnerable to adversarial attacks due to the insufficient diversity of the reference data.

The model may require more computational resources for training to compensate for the limited reference data.

The evaluation may overestimate the model's performance, as it's being compared against an artificially narrow set of outputs.

The evaluation may underestimate the model's true capabilities because the reference data doesn't cover the full range of acceptable responses.

Which component of an LLM block is responsible for storing and retrieving past interactions with the model to provide context for future requests?

Prompt templates

Data sources

Guardrails

Memory

During the evaluation of an LLM, you find that the model often produces responses that sound fluent and grammatical but are factually incorrect. Which of the following evaluation challenges does this example illustrate?

Data contamination

Lack of data

Limited reference data

Model complexity and decision-making

A company is using a generative AI model to write marketing copy. Which evaluation approach would help them ensure that the generated content is both creative and relevant to their target audience?

Relying solely on human evaluation for a qualitative assessment.

Using only automated metrics like BLEU and ROUGE.

Combining automated metrics for diversity and relevance with human evaluation for creativity and brand alignment.

Focusing only on grammar and syntax checks to ensure accuracy.